Artificial intelligence is expanding so quickly that the real competition is no longer about models. It is about compute. Two forces now shape who leads this era: the companies building specialised AI chips and the cloud giants controlling global infrastructure. This quiet battle influences every breakthrough, partnership and business strategy in 2025.

Why AI Compute Became the Real Battleground

Model sizes have grown at an extreme pace, increasing the demand for high-performance chips. Training a frontier model can require thousands of GPUs running for months, driving costs into the tens of millions of dollars. GPU shortages and rising compute prices have pushed companies toward alternatives that offer faster, cheaper and more predictable performance.
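
To see how "thousands of GPUs for months" turns into tens of millions of dollars, here is a rough back-of-the-envelope sketch. The GPU count, duration and hourly rate are hypothetical inputs chosen only to illustrate the order of magnitude. They are not quoted prices from any vendor, and the sketch ignores storage, networking, failed runs and staff costs.

```python
def training_cost_usd(num_gpus: int, days: float, usd_per_gpu_hour: float) -> float:
    """Rough rental cost of a training run: GPUs x hours x hourly rate."""
    gpu_hours = num_gpus * days * 24
    return gpu_hours * usd_per_gpu_hour


# Hypothetical inputs (assumptions, not real quotes):
# 4,000 GPUs for 90 days at 2.50 USD per GPU-hour.
cost = training_cost_usd(num_gpus=4000, days=90, usd_per_gpu_hour=2.50)
print(f"${cost:,.0f}")  # prints $21,600,000
```

Under these assumptions, doubling either the GPU count or the duration doubles the bill, which is why even small differences in price per GPU-hour matter at this scale.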

The Players Reshaping AI Hardware

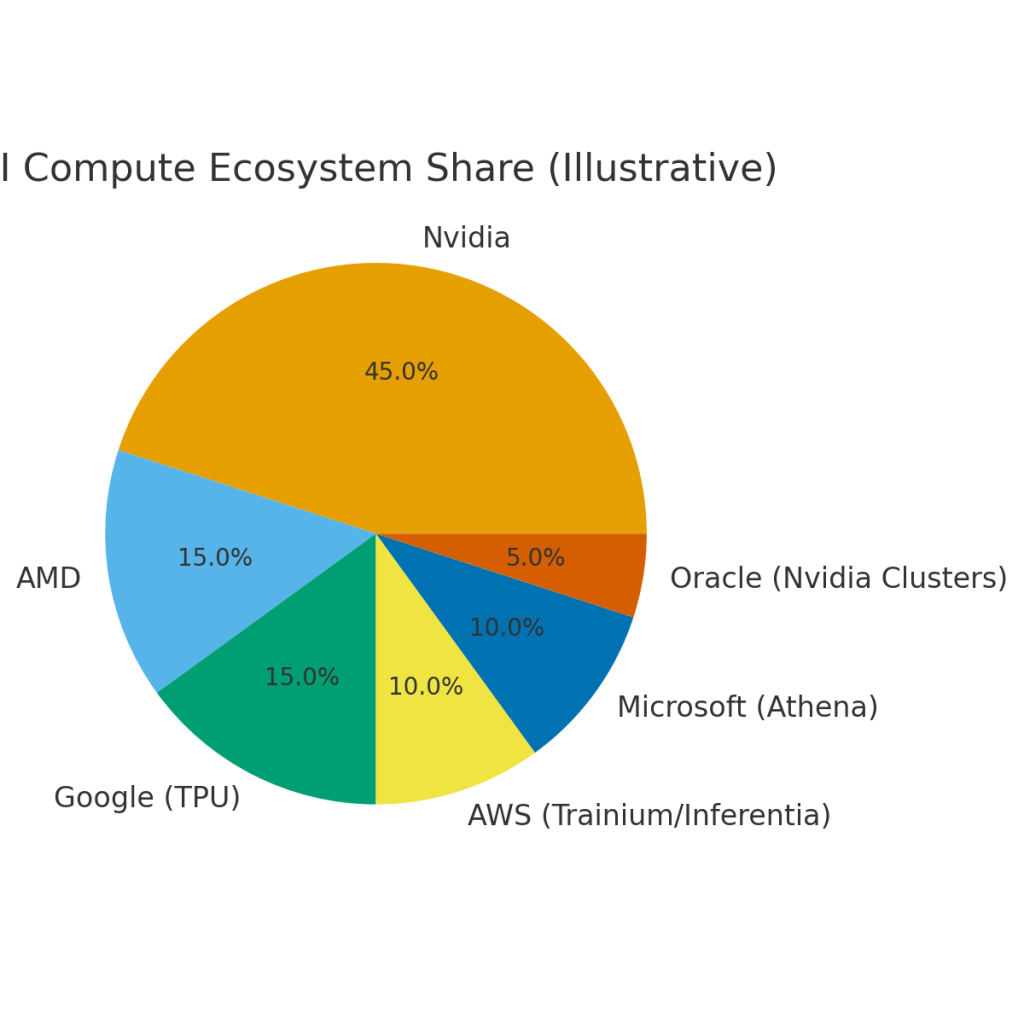

Nvidia remains the dominant supplier of AI chips, defining industry standards and availability cycles. AMD is expanding its footprint through the MI300 series. Google is accelerating TPU development for internal and cloud use. Amazon is scaling Trainium for training and Inferentia for inference. Intel, Cerebras and several chip startups are introducing custom accelerators to close the performance gap and avoid dependency on a single vendor.

Nvidia’s Lead and the Push for Alternatives

Nvidia’s position is strong because its chips, software stack and ecosystem are deeply integrated. But demand is outpacing supply. This has led major cloud providers to design their own chips to improve margins and reduce reliance on Nvidia. AWS Trainium is positioned as a lower-cost training option, while Google uses TPUs to optimise AI workloads across Search, Gemini and YouTube.

The wider market is also expanding. Some industry forecasts project that the AI chip market will exceed US$400 billion by 2030.

Other estimates suggest the broader AI processor market, including chips for data centres and cloud deployments, may reach US$467.09 billion by 2034, pointing to sustained demand across multiple years and use cases.

Cloud Infrastructure: The Second Front in the Compute War

Cloud platforms remain the main way businesses run AI. Microsoft Azure, AWS, Google Cloud and Oracle Cloud supply elastic compute, security, storage and networking. They also provide managed AI services, making it possible to train, deploy and scale without maintaining hardware.

How Cloud Providers Are Creating Their Own Chip Strategies

AWS leads with Trainium and Inferentia. Google continues to build TPUs. Microsoft has introduced its own Azure Maia AI accelerator, reportedly developed under the codename Athena, to diversify compute options. Oracle is betting on high-density Nvidia clusters to support enterprise AI workloads. These strategies help cloud providers control availability, reduce cost and deliver optimised compute combinations for different AI tasks.

The New Competitive Alliances

Cloud providers are securing partnerships with model developers and hardware vendors to guarantee long-term compute capacity. Examples include Microsoft with OpenAI, Google with Anthropic and Oracle with Nvidia. These alliances influence how fast models are trained, who gets compute first and which platforms attract enterprise AI spending.

The Startup Challenge: Cost, Lock-In and Survival

Startups face rising training costs and long wait times for GPUs. Many depend on a single cloud provider and struggle to shift workloads once they scale. This makes pricing, chip selection and infrastructure decisions critical for survival.

Hybrid Strategies: When Chips and Cloud Work Together

Many companies are combining dedicated AI chips for predictable workloads with cloud compute for flexible or experimental tasks. This mix helps balance performance, cost and availability.
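
One simple way to reason about this split is a break-even calculation: dedicated hardware has a roughly fixed monthly cost, while cloud compute is billed by the hour. The sketch below is illustrative only. The function names and the cost figures are hypothetical assumptions, and a real decision would also weigh availability, performance and migration effort.

```python
def break_even_hours(dedicated_usd_per_month: float, cloud_usd_per_hour: float) -> float:
    """Monthly usage above which dedicated hardware costs less than cloud."""
    return dedicated_usd_per_month / cloud_usd_per_hour


def cheaper_option(hours_per_month: float,
                   dedicated_usd_per_month: float,
                   cloud_usd_per_hour: float) -> str:
    """Return 'dedicated' or 'cloud' based on cost alone."""
    threshold = break_even_hours(dedicated_usd_per_month, cloud_usd_per_hour)
    return "dedicated" if hours_per_month > threshold else "cloud"


# Hypothetical figures (assumptions): 1,200 USD per month for one dedicated
# accelerator versus 2.50 USD per hour for a comparable cloud instance.
print(break_even_hours(1200, 2.50))     # prints 480.0
print(cheaper_option(700, 1200, 2.50))  # steady workload: prints dedicated
print(cheaper_option(100, 1200, 2.50))  # occasional experiments: prints cloud
```

With these assumed numbers, a workload that runs most of the month favours dedicated chips, while bursty or experimental work is cheaper in the cloud.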

What This Means for the Industry

The outcome of this silent war affects operational costs, data strategies, product timelines and long-term competitiveness. Businesses must understand not just AI models but also the hardware choices shaping them.

Conclusion

The future of AI will be defined by those who control compute. The real advantage lies in how organisations balance specialised chips with cloud infrastructure to meet performance demands and stay ahead.